08-27-周三_17-09-29

24

node_modules/concat-stream/LICENSE

generated

vendored

Normal file

@@ -0,0 +1,24 @@

The MIT License

Copyright (c) 2013 Max Ogden

Permission is hereby granted, free of charge,
to any person obtaining a copy of this software and
associated documentation files (the "Software"), to
deal in the Software without restriction, including
without limitation the rights to use, copy, modify,
merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom
the Software is furnished to do so,
subject to the following conditions:

The above copyright notice and this permission notice
shall be included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND,
EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES
OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT.
IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR
ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT,
TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE
SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

136

node_modules/concat-stream/index.js

generated

vendored

Normal file

@@ -0,0 +1,136 @@

var Writable = require('readable-stream').Writable
var inherits = require('inherits')

if (typeof Uint8Array === 'undefined') {
  var U8 = require('typedarray').Uint8Array
} else {
  var U8 = Uint8Array
}

function ConcatStream(opts, cb) {
  if (!(this instanceof ConcatStream)) return new ConcatStream(opts, cb)

  if (typeof opts === 'function') {
    cb = opts
    opts = {}
  }
  if (!opts) opts = {}

  var encoding = opts.encoding
  var shouldInferEncoding = false

  if (!encoding) {
    shouldInferEncoding = true
  } else {
    encoding = String(encoding).toLowerCase()
    if (encoding === 'u8' || encoding === 'uint8') {
      encoding = 'uint8array'
    }
  }

  Writable.call(this, { objectMode: true })

  this.encoding = encoding
  this.shouldInferEncoding = shouldInferEncoding

  if (cb) this.on('finish', function () { cb(this.getBody()) })
  this.body = []
}

module.exports = ConcatStream
inherits(ConcatStream, Writable)

ConcatStream.prototype._write = function(chunk, enc, next) {
  this.body.push(chunk)
  next()
}

ConcatStream.prototype.inferEncoding = function (buff) {
  var firstBuffer = buff === undefined ? this.body[0] : buff;
  if (Buffer.isBuffer(firstBuffer)) return 'buffer'
  if (typeof Uint8Array !== 'undefined' && firstBuffer instanceof Uint8Array) return 'uint8array'
  if (Array.isArray(firstBuffer)) return 'array'
  if (typeof firstBuffer === 'string') return 'string'
  if (Object.prototype.toString.call(firstBuffer) === "[object Object]") return 'object'
  return 'buffer'
}

ConcatStream.prototype.getBody = function () {
  if (!this.encoding && this.body.length === 0) return []
  if (this.shouldInferEncoding) this.encoding = this.inferEncoding()
  if (this.encoding === 'array') return arrayConcat(this.body)
  if (this.encoding === 'string') return stringConcat(this.body)
  if (this.encoding === 'buffer') return bufferConcat(this.body)
  if (this.encoding === 'uint8array') return u8Concat(this.body)
  return this.body
}

var isArray = Array.isArray || function (arr) {
  return Object.prototype.toString.call(arr) == '[object Array]'
}

function isArrayish (arr) {
  return /Array\]$/.test(Object.prototype.toString.call(arr))
}

function stringConcat (parts) {
  var strings = []
  var needsToString = false
  for (var i = 0; i < parts.length; i++) {
    var p = parts[i]
    if (typeof p === 'string') {
      strings.push(p)
    } else if (Buffer.isBuffer(p)) {
      strings.push(p)
    } else {
      strings.push(Buffer(p))
    }
  }
  if (Buffer.isBuffer(parts[0])) {
    strings = Buffer.concat(strings)
    strings = strings.toString('utf8')
  } else {
    strings = strings.join('')
  }
  return strings
}

function bufferConcat (parts) {
  var bufs = []
  for (var i = 0; i < parts.length; i++) {
    var p = parts[i]
    if (Buffer.isBuffer(p)) {
      bufs.push(p)
    } else if (typeof p === 'string' || isArrayish(p)
        || (p && typeof p.subarray === 'function')) {
      bufs.push(Buffer(p))
    } else bufs.push(Buffer(String(p)))
  }
  return Buffer.concat(bufs)
}

function arrayConcat (parts) {
  var res = []
  for (var i = 0; i < parts.length; i++) {
    res.push.apply(res, parts[i])
  }
  return res
}

function u8Concat (parts) {
  var len = 0
  for (var i = 0; i < parts.length; i++) {
    if (typeof parts[i] === 'string') {
      parts[i] = Buffer(parts[i])
    }
    len += parts[i].length
  }
  var u8 = new U8(len)
  for (var i = 0, offset = 0; i < parts.length; i++) {
    var part = parts[i]
    for (var j = 0; j < part.length; j++) {
      u8[offset++] = part[j]
    }
  }
  return u8
}
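
As a reading aid for the `u8Concat` function above, here is a minimal standalone sketch of the same two-pass idea: measure the total length first, then copy each chunk in at a running offset. It is not part of the commit or of concat-stream. The helper name `concatToUint8Array` is hypothetical, and it assumes a Node.js runtime where `Buffer.from` and `TypedArray.prototype.set` are available, using them in place of the deprecated `Buffer(string)` call and the inner byte-by-byte loop.

```javascript
// Illustrative sketch only: concatToUint8Array is a hypothetical helper,
// not part of concat-stream.
function concatToUint8Array(parts) {
  // Turn string chunks into bytes; Buffer.from is the non-deprecated
  // replacement for calling Buffer(string) directly.
  const chunks = parts.map(function (p) {
    return typeof p === 'string' ? Buffer.from(p) : p;
  });

  // First pass: total length of the output.
  const total = chunks.reduce(function (n, c) { return n + c.length; }, 0);

  // Second pass: copy each chunk in at a running offset.
  const out = new Uint8Array(total);
  let offset = 0;
  for (const chunk of chunks) {
    out.set(chunk, offset);
    offset += chunk.length;
  }
  return out;
}

const joined = concatToUint8Array(['ab', new Uint8Array([99]), [100, 101]]);
console.log(Buffer.from(joined).toString('utf8')); // abcde
```

Mixed chunk types (string, typed array, plain array of byte values) are accepted here because the vendored function handles them too; whether real callers rely on that is not something this diff shows.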

1

node_modules/concat-stream/node_modules/isarray/.npmignore

generated

vendored

Normal file

@@ -0,0 +1 @@

node_modules

4

node_modules/concat-stream/node_modules/isarray/.travis.yml

generated

vendored

Normal file

@@ -0,0 +1,4 @@

language: node_js
node_js:
  - "0.8"
  - "0.10"

6

node_modules/concat-stream/node_modules/isarray/Makefile

generated

vendored

Normal file

@@ -0,0 +1,6 @@

test:
	@node_modules/.bin/tape test.js

.PHONY: test

60

node_modules/concat-stream/node_modules/isarray/README.md

generated

vendored

Normal file

@@ -0,0 +1,60 @@

# isarray

`Array#isArray` for older browsers.

[](http://travis-ci.org/juliangruber/isarray)
[](https://www.npmjs.org/package/isarray)

[](https://ci.testling.com/juliangruber/isarray)

## Usage

```js
var isArray = require('isarray');

console.log(isArray([])); // => true
console.log(isArray({})); // => false
```

## Installation

With [npm](http://npmjs.org) do

```bash
$ npm install isarray
```

Then bundle for the browser with
[browserify](https://github.com/substack/browserify).

With [component](http://component.io) do

```bash
$ component install juliangruber/isarray
```

## License

(MIT)

Copyright (c) 2013 Julian Gruber <julian@juliangruber.com>

Permission is hereby granted, free of charge, to any person obtaining a copy of
this software and associated documentation files (the "Software"), to deal in
the Software without restriction, including without limitation the rights to
use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies
of the Software, and to permit persons to whom the Software is furnished to do
so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.

19

node_modules/concat-stream/node_modules/isarray/component.json

generated

vendored

Normal file

@@ -0,0 +1,19 @@

{
  "name" : "isarray",
  "description" : "Array#isArray for older browsers",
  "version" : "0.0.1",
  "repository" : "juliangruber/isarray",
  "homepage": "https://github.com/juliangruber/isarray",
  "main" : "index.js",
  "scripts" : [
    "index.js"
  ],
  "dependencies" : {},
  "keywords": ["browser","isarray","array"],
  "author": {
    "name": "Julian Gruber",
    "email": "mail@juliangruber.com",
    "url": "http://juliangruber.com"
  },
  "license": "MIT"
}

5

node_modules/concat-stream/node_modules/isarray/index.js

generated

vendored

Normal file

@@ -0,0 +1,5 @@

var toString = {}.toString;

module.exports = Array.isArray || function (arr) {
  return toString.call(arr) == '[object Array]';
};

110

node_modules/concat-stream/node_modules/isarray/package.json

generated

vendored

Normal file

@@ -0,0 +1,110 @@

{
  "_args": [
    [
      {
        "name": "isarray",
        "raw": "isarray@~1.0.0",
        "rawSpec": "~1.0.0",
        "scope": null,
        "spec": ">=1.0.0 <1.1.0",
        "type": "range"
      },
      "/root/gitbook/node_modules/concat-stream/node_modules/readable-stream"
    ]
  ],
  "_from": "isarray@>=1.0.0 <1.1.0",
  "_id": "isarray@1.0.0",
  "_inCache": true,
  "_installable": true,
  "_location": "/concat-stream/isarray",
  "_nodeVersion": "5.1.0",
  "_npmUser": {
    "email": "julian@juliangruber.com",
    "name": "juliangruber"
  },
  "_npmVersion": "3.3.12",
  "_phantomChildren": {},
  "_requested": {
    "name": "isarray",
    "raw": "isarray@~1.0.0",
    "rawSpec": "~1.0.0",
    "scope": null,
    "spec": ">=1.0.0 <1.1.0",
    "type": "range"
  },
  "_requiredBy": [
    "/concat-stream/readable-stream"
  ],
  "_resolved": "https://registry.npmjs.org/isarray/-/isarray-1.0.0.tgz",
  "_shasum": "bb935d48582cba168c06834957a54a3e07124f11",
  "_shrinkwrap": null,
  "_spec": "isarray@~1.0.0",
  "_where": "/root/gitbook/node_modules/concat-stream/node_modules/readable-stream",
  "author": {
    "email": "mail@juliangruber.com",
    "name": "Julian Gruber",
    "url": "http://juliangruber.com"
  },
  "bugs": {
    "url": "https://github.com/juliangruber/isarray/issues"
  },
  "dependencies": {},
  "description": "Array#isArray for older browsers",
  "devDependencies": {
    "tape": "~2.13.4"
  },
  "directories": {},
  "dist": {
    "integrity": "sha512-VLghIWNM6ELQzo7zwmcg0NmTVyWKYjvIeM83yjp0wRDTmUnrM678fQbcKBo6n2CJEF0szoG//ytg+TKla89ALQ==",
    "shasum": "bb935d48582cba168c06834957a54a3e07124f11",
    "signatures": [
      {
        "keyid": "SHA256:jl3bwswu80PjjokCgh0o2w5c2U4LhQAE57gj9cz1kzA",
        "sig": "MEYCIQDAUFha3cw0zrMmy/gRN+em7pQTK/Th9NvZu629sSQJEQIhAOA18G6lEgwD6Vu7/NsqFDjSDBx0WIS23RbPOBWbtgx+"
      }
    ],
    "tarball": "https://registry.npmjs.org/isarray/-/isarray-1.0.0.tgz"
  },
  "gitHead": "2a23a281f369e9ae06394c0fb4d2381355a6ba33",
  "homepage": "https://github.com/juliangruber/isarray",
  "keywords": [
    "browser",
    "isarray",
    "array"
  ],
  "license": "MIT",
  "main": "index.js",
  "maintainers": [
    {
      "email": "julian@juliangruber.com",
      "name": "juliangruber"
    }
  ],
  "name": "isarray",
  "optionalDependencies": {},
  "readme": "ERROR: No README data found!",
  "repository": {
    "type": "git",
    "url": "git://github.com/juliangruber/isarray.git"
  },
  "scripts": {
    "test": "tape test.js"
  },
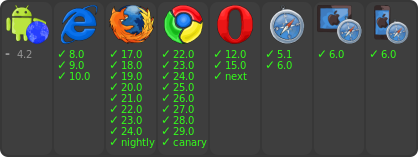

  "testling": {
    "browsers": [
      "ie/8..latest",
      "firefox/17..latest",
      "firefox/nightly",
      "chrome/22..latest",
      "chrome/canary",
      "opera/12..latest",
      "opera/next",
      "safari/5.1..latest",
      "ipad/6.0..latest",
      "iphone/6.0..latest",
      "android-browser/4.2..latest"
    ],
    "files": "test.js"
  },
  "version": "1.0.0"
}

20

node_modules/concat-stream/node_modules/isarray/test.js

generated

vendored

Normal file

@@ -0,0 +1,20 @@

var isArray = require('./');
var test = require('tape');

test('is array', function(t){
  t.ok(isArray([]));
  t.notOk(isArray({}));
  t.notOk(isArray(null));
  t.notOk(isArray(false));

  var obj = {};
  obj[0] = true;
  t.notOk(isArray(obj));

  var arr = [];
  arr.foo = 'bar';
  t.ok(isArray(arr));

  t.end();
});

5

node_modules/concat-stream/node_modules/readable-stream/.npmignore

generated

vendored

Normal file

@@ -0,0 +1,5 @@

build/
test/
examples/
fs.js
zlib.js

52

node_modules/concat-stream/node_modules/readable-stream/.travis.yml

generated

vendored

Normal file

@@ -0,0 +1,52 @@

sudo: false
language: node_js
before_install:
  - npm install -g npm@2
  - npm install -g npm
notifications:
  email: false
matrix:
  fast_finish: true
  allow_failures:
    - env: TASK=browser BROWSER_NAME=ipad BROWSER_VERSION="6.0..latest"
    - env: TASK=browser BROWSER_NAME=iphone BROWSER_VERSION="6.0..latest"
  include:
  - node_js: '0.8'
    env: TASK=test
  - node_js: '0.10'
    env: TASK=test
  - node_js: '0.11'
    env: TASK=test
  - node_js: '0.12'
    env: TASK=test
  - node_js: 1
    env: TASK=test
  - node_js: 2
    env: TASK=test
  - node_js: 3
    env: TASK=test
  - node_js: 4
    env: TASK=test
  - node_js: 5
    env: TASK=test
  - node_js: 5
    env: TASK=browser BROWSER_NAME=android BROWSER_VERSION="4.0..latest"
  - node_js: 5
    env: TASK=browser BROWSER_NAME=ie BROWSER_VERSION="9..latest"
  - node_js: 5
    env: TASK=browser BROWSER_NAME=opera BROWSER_VERSION="11..latest"
  - node_js: 5
    env: TASK=browser BROWSER_NAME=chrome BROWSER_VERSION="-3..latest"
  - node_js: 5
    env: TASK=browser BROWSER_NAME=firefox BROWSER_VERSION="-3..latest"
  - node_js: 5
    env: TASK=browser BROWSER_NAME=ipad BROWSER_VERSION="6.0..latest"
  - node_js: 5
    env: TASK=browser BROWSER_NAME=iphone BROWSER_VERSION="6.0..latest"
  - node_js: 5
    env: TASK=browser BROWSER_NAME=safari BROWSER_VERSION="5..latest"
script: "npm run $TASK"
env:
  global:
  - secure: rE2Vvo7vnjabYNULNyLFxOyt98BoJexDqsiOnfiD6kLYYsiQGfr/sbZkPMOFm9qfQG7pjqx+zZWZjGSswhTt+626C0t/njXqug7Yps4c3dFblzGfreQHp7wNX5TFsvrxd6dAowVasMp61sJcRnB2w8cUzoe3RAYUDHyiHktwqMc=
  - secure: g9YINaKAdMatsJ28G9jCGbSaguXCyxSTy+pBO6Ch0Cf57ZLOTka3HqDj8p3nV28LUIHZ3ut5WO43CeYKwt4AUtLpBS3a0dndHdY6D83uY6b2qh5hXlrcbeQTq2cvw2y95F7hm4D1kwrgZ7ViqaKggRcEupAL69YbJnxeUDKWEdI=

1

node_modules/concat-stream/node_modules/readable-stream/.zuul.yml

generated

vendored

Normal file

@@ -0,0 +1 @@

ui: tape

18

node_modules/concat-stream/node_modules/readable-stream/LICENSE

generated

vendored

Normal file

@@ -0,0 +1,18 @@

Copyright Joyent, Inc. and other Node contributors. All rights reserved.
Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to
deal in the Software without restriction, including without limitation the
rights to use, copy, modify, merge, publish, distribute, sublicense, and/or
sell copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in
all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING
FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS
IN THE SOFTWARE.

36

node_modules/concat-stream/node_modules/readable-stream/README.md

generated

vendored

Normal file

@@ -0,0 +1,36 @@

# readable-stream

***Node-core v5.8.0 streams for userland*** [](https://travis-ci.org/nodejs/readable-stream)


[](https://nodei.co/npm/readable-stream/)
[](https://nodei.co/npm/readable-stream/)


[](https://saucelabs.com/u/readable-stream)

```bash
npm install --save readable-stream
```

***Node-core streams for userland***

This package is a mirror of the Streams2 and Streams3 implementations in
Node-core, including [documentation](doc/stream.markdown).

If you want to guarantee a stable streams base, regardless of what version of
Node you, or the users of your libraries are using, use **readable-stream** *only* and avoid the *"stream"* module in Node-core, for background see [this blogpost](http://r.va.gg/2014/06/why-i-dont-use-nodes-core-stream-module.html).

As of version 2.0.0 **readable-stream** uses semantic versioning.

# Streams WG Team Members

* **Chris Dickinson** ([@chrisdickinson](https://github.com/chrisdickinson)) <christopher.s.dickinson@gmail.com>
  - Release GPG key: 9554F04D7259F04124DE6B476D5A82AC7E37093B
* **Calvin Metcalf** ([@calvinmetcalf](https://github.com/calvinmetcalf)) <calvin.metcalf@gmail.com>
  - Release GPG key: F3EF5F62A87FC27A22E643F714CE4FF5015AA242
* **Rod Vagg** ([@rvagg](https://github.com/rvagg)) <rod@vagg.org>
  - Release GPG key: DD8F2338BAE7501E3DD5AC78C273792F7D83545D
* **Sam Newman** ([@sonewman](https://github.com/sonewman)) <newmansam@outlook.com>
* **Mathias Buus** ([@mafintosh](https://github.com/mafintosh)) <mathiasbuus@gmail.com>
* **Domenic Denicola** ([@domenic](https://github.com/domenic)) <d@domenic.me>

1760

node_modules/concat-stream/node_modules/readable-stream/doc/stream.markdown

generated

vendored

Normal file

File diff suppressed because it is too large

60

node_modules/concat-stream/node_modules/readable-stream/doc/wg-meetings/2015-01-30.md

generated

vendored

Normal file

@@ -0,0 +1,60 @@

# streams WG Meeting 2015-01-30

## Links

* **Google Hangouts Video**: http://www.youtube.com/watch?v=I9nDOSGfwZg
* **GitHub Issue**: https://github.com/iojs/readable-stream/issues/106
* **Original Minutes Google Doc**: https://docs.google.com/document/d/17aTgLnjMXIrfjgNaTUnHQO7m3xgzHR2VXBTmi03Qii4/

## Agenda

Extracted from https://github.com/iojs/readable-stream/labels/wg-agenda prior to meeting.

* adopt a charter [#105](https://github.com/iojs/readable-stream/issues/105)
* release and versioning strategy [#101](https://github.com/iojs/readable-stream/issues/101)
* simpler stream creation [#102](https://github.com/iojs/readable-stream/issues/102)
* proposal: deprecate implicit flowing of streams [#99](https://github.com/iojs/readable-stream/issues/99)

## Minutes

### adopt a charter

* group: +1's all around

### What versioning scheme should be adopted?
* group: +1’s 3.0.0
* domenic+group: pulling in patches from other sources where appropriate
* mikeal: version independently, suggesting versions for io.js
* mikeal+domenic: work with TC to notify in advance of changes
simpler stream creation

### streamline creation of streams
* sam: streamline creation of streams
* domenic: nice simple solution posted
but, we lose the opportunity to change the model
may not be backwards incompatible (double check keys)

**action item:** domenic will check

### remove implicit flowing of streams on(‘data’)
* add isFlowing / isPaused
* mikeal: worrying that we’re documenting polyfill methods – confuses users
* domenic: more reflective API is probably good, with warning labels for users
* new section for mad scientists (reflective stream access)
* calvin: name the “third state”
* mikeal: maybe borrow the name from whatwg?
* domenic: we’re missing the “third state”
* consensus: kind of difficult to name the third state
* mikeal: figure out differences in states / compat
* mathias: always flow on data – eliminates third state
* explore what it breaks

**action items:**
* ask isaac for ability to list packages by what public io.js APIs they use (esp. Stream)
* ask rod/build for infrastructure
* **chris**: explore the “flow on data” approach
* add isPaused/isFlowing
* add new docs section
* move isPaused to that section

1

node_modules/concat-stream/node_modules/readable-stream/duplex.js

generated

vendored

Normal file

@@ -0,0 +1 @@

module.exports = require("./lib/_stream_duplex.js")

75

node_modules/concat-stream/node_modules/readable-stream/lib/_stream_duplex.js

generated

vendored

Normal file

@@ -0,0 +1,75 @@

// a duplex stream is just a stream that is both readable and writable.
// Since JS doesn't have multiple prototypal inheritance, this class
// prototypally inherits from Readable, and then parasitically from
// Writable.

'use strict';

/*<replacement>*/

var objectKeys = Object.keys || function (obj) {
  var keys = [];
  for (var key in obj) {
    keys.push(key);
  }return keys;
};
/*</replacement>*/

module.exports = Duplex;

/*<replacement>*/
var processNextTick = require('process-nextick-args');
/*</replacement>*/

/*<replacement>*/
var util = require('core-util-is');
util.inherits = require('inherits');
/*</replacement>*/

var Readable = require('./_stream_readable');
var Writable = require('./_stream_writable');

util.inherits(Duplex, Readable);

var keys = objectKeys(Writable.prototype);
for (var v = 0; v < keys.length; v++) {
  var method = keys[v];
  if (!Duplex.prototype[method]) Duplex.prototype[method] = Writable.prototype[method];
}

function Duplex(options) {
  if (!(this instanceof Duplex)) return new Duplex(options);

  Readable.call(this, options);
  Writable.call(this, options);

  if (options && options.readable === false) this.readable = false;

  if (options && options.writable === false) this.writable = false;

  this.allowHalfOpen = true;
  if (options && options.allowHalfOpen === false) this.allowHalfOpen = false;

  this.once('end', onend);
}

// the no-half-open enforcer
function onend() {
  // if we allow half-open state, or if the writable side ended,
  // then we're ok.
  if (this.allowHalfOpen || this._writableState.ended) return;

  // no more data can be written.
  // But allow more writes to happen in this tick.
  processNextTick(onEndNT, this);
}

function onEndNT(self) {
  self.end();
}

function forEach(xs, f) {
  for (var i = 0, l = xs.length; i < l; i++) {
    f(xs[i], i);
  }
}
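
The header comment of `_stream_duplex.js` describes inheriting "prototypally" from `Readable` and "parasitically" from `Writable`. The sketch below shows that pattern in isolation, under the assumption that plain constructor functions are enough to demonstrate it. `Reader`, `Writer` and `Both` are hypothetical stand-ins, not names from readable-stream, and the snippet is not part of the commit.

```javascript
// Illustrative sketch only: Reader, Writer and Both are hypothetical
// stand-ins for Readable, Writable and Duplex.
function Reader() { this.readable = true; }
Reader.prototype.read = function () { return 'read from Reader'; };

function Writer() { this.writable = true; }
Writer.prototype.write = function () { return 'write from Writer'; };
Writer.prototype.read = function () { return 'read from Writer'; };

function Both() {
  // Run both constructors against the same instance.
  Reader.call(this);
  Writer.call(this);
}

// Prototypal inheritance: Both.prototype chains to Reader.prototype.
Both.prototype = Object.create(Reader.prototype, {
  constructor: { value: Both, writable: true, configurable: true }
});

// "Parasitic" inheritance: copy over only the Writer methods that Both
// does not already have, so Reader's methods win on a name clash.
Object.keys(Writer.prototype).forEach(function (name) {
  if (!Both.prototype[name]) Both.prototype[name] = Writer.prototype[name];
});

const both = new Both();
console.log(both instanceof Reader); // true
console.log(both instanceof Writer); // false
console.log(both.write());           // write from Writer
console.log(both.read());            // read from Reader
```

One consequence visible in the sketch is that `instanceof` only recognises the prototypal parent; the copied methods work, but the object is not an instance of the second constructor.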

26

node_modules/concat-stream/node_modules/readable-stream/lib/_stream_passthrough.js

generated

vendored

Normal file

@@ -0,0 +1,26 @@

// a passthrough stream.
// basically just the most minimal sort of Transform stream.
// Every written chunk gets output as-is.

'use strict';

module.exports = PassThrough;

var Transform = require('./_stream_transform');

/*<replacement>*/
var util = require('core-util-is');
util.inherits = require('inherits');
/*</replacement>*/

util.inherits(PassThrough, Transform);

function PassThrough(options) {
  if (!(this instanceof PassThrough)) return new PassThrough(options);

  Transform.call(this, options);
}

PassThrough.prototype._transform = function (chunk, encoding, cb) {
  cb(null, chunk);
};
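
To illustrate "every written chunk gets output as-is", here is a small usage sketch that is not part of the commit. It assumes Node's built-in `stream` module rather than the vendored copy, purely so the snippet runs without installing anything; the variable names are illustrative.

```javascript
// Assumption: Node's built-in 'stream' module stands in for the vendored
// readable-stream copy so that this runs with no dependencies installed.
const { PassThrough } = require('stream');

const pass = new PassThrough();
pass.write('hello ');
pass.write('world');

// read() with no size argument returns everything buffered so far
// as a single Buffer (the stream is not in flowing mode here).
const out = pass.read();
console.log(out.toString('utf8'));
```

Nothing in the written data is altered on the way through; the only visible change is that string chunks come back out as a `Buffer`, which is the default behaviour for non-object-mode streams.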

880

node_modules/concat-stream/node_modules/readable-stream/lib/_stream_readable.js

generated

vendored

Normal file

@@ -0,0 +1,880 @@

|

||||

'use strict';

|

||||

|

||||

module.exports = Readable;

|

||||

|

||||

/*<replacement>*/

|

||||

var processNextTick = require('process-nextick-args');

|

||||

/*</replacement>*/

|

||||

|

||||

/*<replacement>*/

|

||||

var isArray = require('isarray');

|

||||

/*</replacement>*/

|

||||

|

||||

/*<replacement>*/

|

||||

var Buffer = require('buffer').Buffer;

|

||||

/*</replacement>*/

|

||||

|

||||

Readable.ReadableState = ReadableState;

|

||||

|

||||

var EE = require('events');

|

||||

|

||||

/*<replacement>*/

|

||||

var EElistenerCount = function (emitter, type) {

|

||||

return emitter.listeners(type).length;

|

||||

};

|

||||

/*</replacement>*/

|

||||

|

||||

/*<replacement>*/

|

||||

var Stream;

|

||||

(function () {

|

||||

try {

|

||||

Stream = require('st' + 'ream');

|

||||

} catch (_) {} finally {

|

||||

if (!Stream) Stream = require('events').EventEmitter;

|

||||

}

|

||||

})();

|

||||

/*</replacement>*/

|

||||

|

||||

var Buffer = require('buffer').Buffer;

|

||||

|

||||

/*<replacement>*/

|

||||

var util = require('core-util-is');

|

||||

util.inherits = require('inherits');

|

||||

/*</replacement>*/

|

||||

|

||||

/*<replacement>*/

|

||||

var debugUtil = require('util');

|

||||

var debug = undefined;

|

||||

if (debugUtil && debugUtil.debuglog) {

|

||||

debug = debugUtil.debuglog('stream');

|

||||

} else {

|

||||

debug = function () {};

|

||||

}

|

||||

/*</replacement>*/

|

||||

|

||||

var StringDecoder;

|

||||

|

||||

util.inherits(Readable, Stream);

|

||||

|

||||

var Duplex;

|

||||

function ReadableState(options, stream) {

|

||||

Duplex = Duplex || require('./_stream_duplex');

|

||||

|

||||

options = options || {};

|

||||

|

||||

// object stream flag. Used to make read(n) ignore n and to

|

||||

// make all the buffer merging and length checks go away

|

||||

this.objectMode = !!options.objectMode;

|

||||

|

||||

if (stream instanceof Duplex) this.objectMode = this.objectMode || !!options.readableObjectMode;

|

||||

|

||||

// the point at which it stops calling _read() to fill the buffer

|

||||

// Note: 0 is a valid value, means "don't call _read preemptively ever"

|

||||

var hwm = options.highWaterMark;

|

||||

var defaultHwm = this.objectMode ? 16 : 16 * 1024;

|

||||

this.highWaterMark = hwm || hwm === 0 ? hwm : defaultHwm;

|

||||

|

||||

// cast to ints.

|

||||

this.highWaterMark = ~~this.highWaterMark;

|

||||

|

||||

this.buffer = [];

|

||||

this.length = 0;

|

||||

this.pipes = null;

|

||||

this.pipesCount = 0;

|

||||

this.flowing = null;

|

||||

this.ended = false;

|

||||

this.endEmitted = false;

|

||||

this.reading = false;

|

||||

|

||||

// a flag to be able to tell if 'readable'/'data' is emitted immediately,

|

||||

// or on a later tick. We set this to true at first, because any

|

||||

// actions that shouldn't happen until "later" should generally also

|

||||

// not happen before the first read call.

|

||||

this.sync = true;

|

||||

|

||||

// whenever we return null, then we set a flag to say

|

||||

// that we're awaiting a 'readable' event emission.

|

||||

this.needReadable = false;

|

||||

this.emittedReadable = false;

|

||||

this.readableListening = false;

|

||||

this.resumeScheduled = false;

|

||||

|

||||

// Crypto is kind of old and crusty. Historically, its default string

|

||||

// encoding is 'binary' so we have to make this configurable.

|

||||

// Everything else in the universe uses 'utf8', though.

|

||||

this.defaultEncoding = options.defaultEncoding || 'utf8';

|

||||

|

||||

// when piping, we only care about 'readable' events that happen

|

||||

// after read()ing all the bytes and not getting any pushback.

|

||||

this.ranOut = false;

|

||||

|

||||

// the number of writers that are awaiting a drain event in .pipe()s

|

||||

this.awaitDrain = 0;

|

||||

|

||||

// if true, a maybeReadMore has been scheduled

|

||||

this.readingMore = false;

|

||||

|

||||

this.decoder = null;

|

||||

this.encoding = null;

|

||||

if (options.encoding) {

|

||||

if (!StringDecoder) StringDecoder = require('string_decoder/').StringDecoder;

|

||||

this.decoder = new StringDecoder(options.encoding);

|

||||

this.encoding = options.encoding;

|

||||

}

|

||||

}
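The `highWaterMark` defaulting in `ReadableState` can be tried in isolation. The sketch below copies only that logic into a hypothetical helper, `resolveHighWaterMark`, which is not part of the library. It shows that `0` survives as a valid value and that the double bitwise NOT truncates to an integer.

```javascript
// Hypothetical helper mirroring the defaulting logic in ReadableState.
function resolveHighWaterMark(options) {
  options = options || {};
  var objectMode = !!options.objectMode;
  var hwm = options.highWaterMark;
  // Object streams count objects, byte streams count bytes.
  var defaultHwm = objectMode ? 16 : 16 * 1024;
  // 0 is falsy but valid, so it is checked explicitly before defaulting.
  var resolved = hwm || hwm === 0 ? hwm : defaultHwm;
  // Double bitwise NOT casts to a 32-bit integer.
  return ~~resolved;
}

console.log(resolveHighWaterMark());                        // default for byte streams
console.log(resolveHighWaterMark({ objectMode: true }));    // default for object streams
console.log(resolveHighWaterMark({ highWaterMark: 0 }));    // 0 is preserved
console.log(resolveHighWaterMark({ highWaterMark: 10.7 })); // truncated to an integer
```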

|

||||

|

||||

var Duplex;

|

||||

function Readable(options) {

|

||||

Duplex = Duplex || require('./_stream_duplex');

|

||||

|

||||

if (!(this instanceof Readable)) return new Readable(options);

|

||||

|

||||

this._readableState = new ReadableState(options, this);

|

||||

|

||||

// legacy

|

||||

this.readable = true;

|

||||

|

||||

if (options && typeof options.read === 'function') this._read = options.read;

|

||||

|

||||

Stream.call(this);

|

||||

}

|

||||

|

||||

// Manually shove something into the read() buffer.

|

||||

// This returns true if the highWaterMark has not been hit yet,

|

||||

// similar to how Writable.write() returns true if you should

|

||||

// write() some more.

|

||||

Readable.prototype.push = function (chunk, encoding) {

|

||||

var state = this._readableState;

|

||||

|

||||

if (!state.objectMode && typeof chunk === 'string') {

|

||||

encoding = encoding || state.defaultEncoding;

|

||||

if (encoding !== state.encoding) {

|

||||

chunk = new Buffer(chunk, encoding);

|

||||

encoding = '';

|

||||

}

|

||||

}

|

||||

|

||||

return readableAddChunk(this, state, chunk, encoding, false);

|

||||

};
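The return value of `push()` acts as a backpressure hint for the producer. The sketch below uses Node's core `stream` module, on the assumption that it behaves like this vendored copy for this simple case. The 4-byte `highWaterMark` and the no-op `read` are illustrative choices only.

```javascript
var Readable = require('stream').Readable;

// A tiny 4-byte high water mark makes the threshold easy to cross.
// The no-op read() means data only arrives through explicit push() calls.
var source = new Readable({ highWaterMark: 4, read: function () {} });

var first = source.push('ab');    // 2 bytes buffered, still below the mark
var second = source.push('cdef'); // 6 bytes buffered, at or past the mark
var drained = source.read();      // paused mode: returns everything buffered

console.log(first, second, drained.toString());
```

A producer that respects the `false` return value would stop pushing until `_read()` is called again.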

|

||||

|

||||

// Unshift should *always* be something directly out of read()

|

||||

Readable.prototype.unshift = function (chunk) {

|

||||

var state = this._readableState;

|

||||

return readableAddChunk(this, state, chunk, '', true);

|

||||

};

|

||||

|

||||

Readable.prototype.isPaused = function () {

|

||||

return this._readableState.flowing === false;

|

||||

};

|

||||

|

||||

function readableAddChunk(stream, state, chunk, encoding, addToFront) {

|

||||

var er = chunkInvalid(state, chunk);

|

||||

if (er) {

|

||||

stream.emit('error', er);

|

||||

} else if (chunk === null) {

|

||||

state.reading = false;

|

||||

onEofChunk(stream, state);

|

||||

} else if (state.objectMode || chunk && chunk.length > 0) {

|

||||

if (state.ended && !addToFront) {

|

||||

var e = new Error('stream.push() after EOF');

|

||||

stream.emit('error', e);

|

||||

} else if (state.endEmitted && addToFront) {

|

||||

var e = new Error('stream.unshift() after end event');

|

||||

stream.emit('error', e);

|

||||

} else {

|

||||

var skipAdd;

|

||||

if (state.decoder && !addToFront && !encoding) {

|

||||

chunk = state.decoder.write(chunk);

|

||||

skipAdd = !state.objectMode && chunk.length === 0;

|

||||

}

|

||||

|

||||

if (!addToFront) state.reading = false;

|

||||

|

||||

// Don't add to the buffer if we've decoded to an empty string chunk and

|

||||

// we're not in object mode

|

||||

if (!skipAdd) {

|

||||

// if we want the data now, just emit it.

|

||||

if (state.flowing && state.length === 0 && !state.sync) {

|

||||

stream.emit('data', chunk);

|

||||

stream.read(0);

|

||||

} else {

|

||||

// update the buffer info.

|

||||

state.length += state.objectMode ? 1 : chunk.length;

|

||||

if (addToFront) state.buffer.unshift(chunk);else state.buffer.push(chunk);

|

||||

|

||||

if (state.needReadable) emitReadable(stream);

|

||||

}

|

||||

}

|

||||

|

||||

maybeReadMore(stream, state);

|

||||

}

|

||||

} else if (!addToFront) {

|

||||

state.reading = false;

|

||||

}

|

||||

|

||||

return needMoreData(state);

|

||||

}

|

||||

|

||||

// if it's not yet past the high water mark, we can push in some more.

|

||||

// Also, if we have no data yet, we can stand some

|

||||

// more bytes. This is to work around cases where hwm=0,

|

||||

// such as the repl. Also, if the push() triggered a

|

||||

// readable event, and the user called read(largeNumber) such that

|

||||

// needReadable was set, then we ought to push more, so that another

|

||||

// 'readable' event will be triggered.

|

||||

function needMoreData(state) {

|

||||

return !state.ended && (state.needReadable || state.length < state.highWaterMark || state.length === 0);

|

||||

}
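To see how the three conditions in `needMoreData` interact, the sketch below evaluates a copy of the predicate against a few hand-built state objects. `needMoreDataSketch` and the sample states are illustrative assumptions, not library API.

```javascript
// Copy of the predicate from the listing, under a hypothetical name.
function needMoreDataSketch(state) {
  return !state.ended &&
    (state.needReadable || state.length < state.highWaterMark || state.length === 0);
}

// hwm = 0 with an empty buffer: the length === 0 clause still asks for data.
var emptyZeroHwm = needMoreDataSketch(
  { ended: false, needReadable: false, length: 0, highWaterMark: 0 });

// Buffer above the mark and nobody waiting: no more data wanted.
var fullBuffer = needMoreDataSketch(
  { ended: false, needReadable: false, length: 20, highWaterMark: 16 });

// Same buffer, but a reader is waiting for a 'readable' event.
var readerWaiting = needMoreDataSketch(
  { ended: false, needReadable: true, length: 20, highWaterMark: 16 });

// An ended stream never wants more data.
var ended = needMoreDataSketch(
  { ended: true, needReadable: true, length: 0, highWaterMark: 16 });

console.log(emptyZeroHwm, fullBuffer, readerWaiting, ended);
```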

|

||||

|

||||

// backwards compatibility.

|

||||

Readable.prototype.setEncoding = function (enc) {

|

||||

if (!StringDecoder) StringDecoder = require('string_decoder/').StringDecoder;

|

||||

this._readableState.decoder = new StringDecoder(enc);

|

||||

this._readableState.encoding = enc;

|

||||

return this;

|

||||

};

|

||||

|

||||

// Don't raise the hwm > 8MB

|

||||

var MAX_HWM = 0x800000;

|

||||

function computeNewHighWaterMark(n) {

|

||||

if (n >= MAX_HWM) {

|

||||

n = MAX_HWM;

|

||||

} else {

|

||||

// Get the next highest power of 2

|

||||

n--;

|

||||

n |= n >>> 1;

|

||||

n |= n >>> 2;

|

||||

n |= n >>> 4;

|

||||

n |= n >>> 8;

|

||||

n |= n >>> 16;

|

||||

n++;

|

||||

}

|

||||

return n;

|

||||

}
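The bit-smearing steps in `computeNewHighWaterMark` round `n` up to the next power of two, capped at `MAX_HWM`. The sketch below copies the routine under a hypothetical name, `nextPowerOfTwoCapped`, so the rounding can be checked on a few inputs.

```javascript
var CAP = 0x800000; // same 8 MiB cap as MAX_HWM in the listing

// Hypothetical copy of computeNewHighWaterMark, for experimentation only.
function nextPowerOfTwoCapped(n) {
  if (n >= CAP) return CAP;
  // Subtract one so exact powers of two map to themselves.
  n--;
  // Smear the highest set bit into every lower bit position.
  n |= n >>> 1;
  n |= n >>> 2;
  n |= n >>> 4;
  n |= n >>> 8;
  n |= n >>> 16;
  // All low bits are now set, so adding one yields a power of two.
  return n + 1;
}

console.log(nextPowerOfTwoCapped(5));       // rounds up to 8
console.log(nextPowerOfTwoCapped(16384));   // already a power of two
console.log(nextPowerOfTwoCapped(16385));   // rounds up to 32768
console.log(nextPowerOfTwoCapped(CAP + 1)); // clamped to the cap
```

In the listing this is only reached from `howMuchToRead` when a caller requests more than the current `highWaterMark`.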

|

||||

|

||||

function howMuchToRead(n, state) {

|

||||

if (state.length === 0 && state.ended) return 0;

|

||||

|

||||

if (state.objectMode) return n === 0 ? 0 : 1;

|

||||

|

||||

if (n === null || isNaN(n)) {

|

||||

// only flow one buffer at a time

|

||||

if (state.flowing && state.buffer.length) return state.buffer[0].length;else return state.length;

|

||||

}

|

||||

|

||||

if (n <= 0) return 0;

|

||||

|

||||

// If we're asking for more than the target buffer level,

|

||||

// then raise the water mark. Bump up to the next highest

|

||||

// power of 2, to prevent increasing it excessively in tiny

|

||||

// amounts.

|

||||

if (n > state.highWaterMark) state.highWaterMark = computeNewHighWaterMark(n);

|

||||

|

||||

// don't have that much. return null, unless we've ended.

|

||||

if (n > state.length) {

|

||||

if (!state.ended) {

|

||||

state.needReadable = true;

|

||||

return 0;

|

||||

} else {

|

||||

return state.length;

|

||||

}

|

||||

}

|

||||

|

||||

return n;

|

||||

}

|

||||

|

||||

// you can override either this method, or the async _read(n) below.

|

||||

Readable.prototype.read = function (n) {

|

||||

debug('read', n);

|

||||

var state = this._readableState;

|

||||

var nOrig = n;

|

||||

|

||||

if (typeof n !== 'number' || n > 0) state.emittedReadable = false;

|

||||

|

||||

// if we're doing read(0) to trigger a readable event, but we

|

||||

// already have a bunch of data in the buffer, then just trigger

|

||||

// the 'readable' event and move on.

|

||||

if (n === 0 && state.needReadable && (state.length >= state.highWaterMark || state.ended)) {

|

||||

debug('read: emitReadable', state.length, state.ended);

|

||||

if (state.length === 0 && state.ended) endReadable(this);else emitReadable(this);

|

||||

return null;

|

||||

}

|

||||

|

||||

n = howMuchToRead(n, state);

|

||||

|

||||

// if we've ended, and we're now clear, then finish it up.

|

||||

if (n === 0 && state.ended) {

|

||||

if (state.length === 0) endReadable(this);

|

||||

return null;

|

||||

}

|

||||

|

||||

// All the actual chunk generation logic needs to be

|

||||

// *below* the call to _read. The reason is that in certain

|

||||

// synthetic stream cases, such as passthrough streams, _read

|

||||

// may be a completely synchronous operation which may change

|

||||

// the state of the read buffer, providing enough data when

|

||||

// before there was *not* enough.

|

||||

//

|

||||

// So, the steps are:

|

||||

// 1. Figure out what the state of things will be after we do

|

||||

// a read from the buffer.

|

||||

//

|

||||

// 2. If that resulting state will trigger a _read, then call _read.

|

||||

// Note that this may be asynchronous, or synchronous. Yes, it is

|

||||

// deeply ugly to write APIs this way, but that still doesn't mean

|

||||

// that the Readable class should behave improperly, as streams are

|

||||

// designed to be sync/async agnostic.

|

||||

// Take note if the _read call is sync or async (ie, if the read call

|

||||

// has returned yet), so that we know whether or not it's safe to emit

|

||||

// 'readable' etc.

|

||||

//

|

||||

// 3. Actually pull the requested chunks out of the buffer and return.

|

||||

|

||||

// if we need a readable event, then we need to do some reading.

|

||||

var doRead = state.needReadable;

|

||||

debug('need readable', doRead);

|

||||

|

||||

// if we currently have less than the highWaterMark, then also read some

|

||||

if (state.length === 0 || state.length - n < state.highWaterMark) {

|

||||

doRead = true;

|

||||

debug('length less than watermark', doRead);

|

||||

}

|

||||

|

||||

// however, if we've ended, then there's no point, and if we're already

|

||||

// reading, then it's unnecessary.

|

||||

if (state.ended || state.reading) {

|

||||

doRead = false;

|

||||

debug('reading or ended', doRead);

|

||||

}

|

||||

|

||||

if (doRead) {

|

||||

debug('do read');

|

||||

state.reading = true;

|

||||

state.sync = true;

|

||||

// if the length is currently zero, then we *need* a readable event.

|

||||

if (state.length === 0) state.needReadable = true;

|

||||

// call internal read method

|

||||

this._read(state.highWaterMark);

|

||||

state.sync = false;

|

||||

}

|

||||

|

||||

// If _read pushed data synchronously, then `reading` will be false,

|

||||

// and we need to re-evaluate how much data we can return to the user.

|

||||

if (doRead && !state.reading) n = howMuchToRead(nOrig, state);

|

||||

|

||||

var ret;

|

||||

if (n > 0) ret = fromList(n, state);else ret = null;

|

||||

|

||||

if (ret === null) {

|

||||

state.needReadable = true;

|

||||

n = 0;

|

||||

}

|

||||

|

||||

state.length -= n;

|

||||

|

||||

// If we have nothing in the buffer, then we want to know

|

||||

// as soon as we *do* get something into the buffer.

|

||||

if (state.length === 0 && !state.ended) state.needReadable = true;

|

||||

|

||||

// If we tried to read() past the EOF, then emit end on the next tick.

|

||||

if (nOrig !== n && state.ended && state.length === 0) endReadable(this);

|

||||

|

||||

if (ret !== null) this.emit('data', ret);

|

||||

|

||||

return ret;

|

||||

};

|

||||

|

||||

function chunkInvalid(state, chunk) {

|

||||

var er = null;

|

||||

if (!Buffer.isBuffer(chunk) && typeof chunk !== 'string' && chunk !== null && chunk !== undefined && !state.objectMode) {

|

||||

er = new TypeError('Invalid non-string/buffer chunk');

|

||||

}

|

||||

return er;

|

||||

}

|

||||

|

||||

function onEofChunk(stream, state) {

|

||||

if (state.ended) return;

|

||||

if (state.decoder) {

|

||||

var chunk = state.decoder.end();

|

||||

if (chunk && chunk.length) {

|

||||

state.buffer.push(chunk);

|

||||

state.length += state.objectMode ? 1 : chunk.length;

|

||||

}

|

||||

}

|

||||

state.ended = true;

|

||||

|

||||

// emit 'readable' now to make sure it gets picked up.

|

||||

emitReadable(stream);

|

||||

}

|

||||

|

||||

// Don't emit readable right away in sync mode, because this can trigger

|

||||

// another read() call => stack overflow. This way, it might trigger

|

||||

// a nextTick recursion warning, but that's not so bad.

|

||||

function emitReadable(stream) {

|

||||

var state = stream._readableState;

|

||||

state.needReadable = false;

|

||||

if (!state.emittedReadable) {

|

||||

debug('emitReadable', state.flowing);

|

||||

state.emittedReadable = true;

|

||||

if (state.sync) processNextTick(emitReadable_, stream);else emitReadable_(stream);

|

||||

}

|

||||

}

|

||||

|

||||

function emitReadable_(stream) {

|

||||

debug('emit readable');

|

||||

stream.emit('readable');

|

||||

flow(stream);

|

||||

}

|

||||

|

||||

// at this point, the user has presumably seen the 'readable' event,

|

||||

// and called read() to consume some data. that may have triggered

|

||||

// in turn another _read(n) call, in which case reading = true if

|

||||

// it's in progress.

|

||||

// However, if we're not ended, or reading, and the length < hwm,

|

||||

// then go ahead and try to read some more preemptively.

|

||||

function maybeReadMore(stream, state) {

|

||||

if (!state.readingMore) {

|

||||

state.readingMore = true;

|

||||

processNextTick(maybeReadMore_, stream, state);

|

||||

}

|

||||

}

|

||||

|

||||

function maybeReadMore_(stream, state) {

|

||||

var len = state.length;

|

||||

while (!state.reading && !state.flowing && !state.ended && state.length < state.highWaterMark) {

|

||||

debug('maybeReadMore read 0');

|

||||

stream.read(0);

|

||||

if (len === state.length)

|

||||

// didn't get any data, stop spinning.

|

||||

break;else len = state.length;

|

||||

}

|

||||

state.readingMore = false;

|

||||

}

|

||||

|

||||

// abstract method. to be overridden in specific implementation classes.

|

||||

// call this.push(data) where data is <= n in length.

|

||||

// for virtual (non-string, non-buffer) streams, "length" is somewhat

|

||||

// arbitrary, and perhaps not very meaningful.

|

||||

Readable.prototype._read = function (n) {

|

||||

this.emit('error', new Error('not implemented'));

|

||||

};

|

||||

|

||||

Readable.prototype.pipe = function (dest, pipeOpts) {

|

||||

var src = this;

|

||||

var state = this._readableState;

|

||||

|

||||

switch (state.pipesCount) {

|

||||

case 0:

|

||||

state.pipes = dest;

|

||||

break;

|

||||

case 1:

|

||||

state.pipes = [state.pipes, dest];

|

||||

break;

|

||||

default:

|

||||

state.pipes.push(dest);

|

||||

break;

|

||||

}

|

||||

state.pipesCount += 1;

|

||||

debug('pipe count=%d opts=%j', state.pipesCount, pipeOpts);

|

||||

|

||||

var doEnd = (!pipeOpts || pipeOpts.end !== false) && dest !== process.stdout && dest !== process.stderr;

|

||||

|

||||

var endFn = doEnd ? onend : cleanup;

|

||||

if (state.endEmitted) processNextTick(endFn);else src.once('end', endFn);

|

||||

|

||||

dest.on('unpipe', onunpipe);

|

||||

function onunpipe(readable) {

|

||||

debug('onunpipe');

|

||||

if (readable === src) {

|

||||

cleanup();

|

||||

}

|

||||

}

|

||||

|

||||

function onend() {

|

||||

debug('onend');

|

||||

dest.end();

|

||||

}

|

||||

|

||||

// when the dest drains, it reduces the awaitDrain counter

|

||||

// on the source. This would be more elegant with a .once()

|

||||

// handler in flow(), but adding and removing repeatedly is

|

||||

// too slow.

|

||||

var ondrain = pipeOnDrain(src);

|

||||

dest.on('drain', ondrain);

|

||||

|

||||

var cleanedUp = false;

|

||||

function cleanup() {

|

||||

debug('cleanup');

|

||||

// cleanup event handlers once the pipe is broken

|

||||

dest.removeListener('close', onclose);

|

||||

dest.removeListener('finish', onfinish);

|

||||

dest.removeListener('drain', ondrain);

|

||||

dest.removeListener('error', onerror);

|

||||

dest.removeListener('unpipe', onunpipe);

|

||||

src.removeListener('end', onend);

|

||||

src.removeListener('end', cleanup);

|

||||

src.removeListener('data', ondata);

|

||||

|

||||

cleanedUp = true;

|

||||

|

||||

// if the reader is waiting for a drain event from this

|

||||

// specific writer, then it would cause it to never start

|

||||

// flowing again.

|

||||

// So, if this is awaiting a drain, then we just call it now.

|

||||

// If we don't know, then assume that we are waiting for one.

|

||||

if (state.awaitDrain && (!dest._writableState || dest._writableState.needDrain)) ondrain();

|

||||

}

|

||||

|

||||

src.on('data', ondata);

|

||||

function ondata(chunk) {

|

||||

debug('ondata');

|

||||

var ret = dest.write(chunk);

|

||||

if (false === ret) {

|

||||

// If the user unpiped during `dest.write()`, it is possible

|

||||

// to get stuck in a permanently paused state if that write

|

||||

// also returned false.

|

||||

if (state.pipesCount === 1 && state.pipes[0] === dest && src.listenerCount('data') === 1 && !cleanedUp) {

|

||||

debug('false write response, pause', src._readableState.awaitDrain);

|

||||

src._readableState.awaitDrain++;

|

||||

}

|

||||

src.pause();

|

||||

}

|

||||

}

|

||||

|

||||

// if the dest has an error, then stop piping into it.

|

||||

// however, don't suppress the throwing behavior for this.

|

||||

function onerror(er) {

|

||||

debug('onerror', er);

|

||||

unpipe();

|

||||

dest.removeListener('error', onerror);

|

||||

if (EElistenerCount(dest, 'error') === 0) dest.emit('error', er);

|

||||

}

|

||||

// This is a brutally ugly hack to make sure that our error handler

|

||||

// is attached before any userland ones. NEVER DO THIS.

|

||||

if (!dest._events || !dest._events.error) dest.on('error', onerror); else if (isArray(dest._events.error)) dest._events.error.unshift(onerror); else dest._events.error = [onerror, dest._events.error];

|

||||

|

||||

// Both close and finish should trigger unpipe, but only once.

|

||||

function onclose() {

|

||||

dest.removeListener('finish', onfinish);

|

||||

unpipe();

|

||||

}

|

||||

dest.once('close', onclose);

|

||||

function onfinish() {

|

||||

debug('onfinish');

|

||||

dest.removeListener('close', onclose);

|

||||

unpipe();

|

||||

}

|

||||

dest.once('finish', onfinish);

|

||||

|

||||

function unpipe() {

|

||||

debug('unpipe');

|

||||

src.unpipe(dest);

|

||||

}

|

||||

|

||||

// tell the dest that it's being piped to

|

||||

dest.emit('pipe', src);

|

||||

|

||||

// start the flow if it hasn't been started already.

|

||||

if (!state.flowing) {

|

||||

debug('pipe resume');

|

||||

src.resume();

|

||||

}

|

||||

|

||||

return dest;

|

||||

};

|

||||

|

||||

function pipeOnDrain(src) {

|

||||

return function () {

|

||||

var state = src._readableState;

|

||||

debug('pipeOnDrain', state.awaitDrain);

|

||||

if (state.awaitDrain) state.awaitDrain--;

|

||||

if (state.awaitDrain === 0 && EElistenerCount(src, 'data')) {

|

||||

state.flowing = true;

|

||||

flow(src);

|

||||

}

|

||||

};

|

||||

}

|

||||

|

||||

Readable.prototype.unpipe = function (dest) {

|

||||

var state = this._readableState;

|

||||

|

||||

// if we're not piping anywhere, then do nothing.

|

||||

if (state.pipesCount === 0) return this;

|

||||

|

||||

// just one destination. most common case.

|

||||

if (state.pipesCount === 1) {

|

||||

// passed in one, but it's not the right one.

|

||||

if (dest && dest !== state.pipes) return this;

|

||||

|

||||

if (!dest) dest = state.pipes;

|

||||

|

||||

// got a match.

|

||||

state.pipes = null;

|

||||

state.pipesCount = 0;

|

||||

state.flowing = false;

|

||||

if (dest) dest.emit('unpipe', this);

|

||||

return this;

|

||||

}

|

||||

|

||||

// slow case. multiple pipe destinations.

|

||||

|

||||

if (!dest) {

|

||||

// remove all.

|

||||

var dests = state.pipes;

|

||||

var len = state.pipesCount;

|

||||

state.pipes = null;

|

||||

state.pipesCount = 0;

|

||||

state.flowing = false;

|

||||

|

||||

for (var _i = 0; _i < len; _i++) {

|

||||

dests[_i].emit('unpipe', this);

|

||||

}

|

||||

return this;

|

||||

}

|

||||

|

||||

// try to find the right one.

|

||||

var i = indexOf(state.pipes, dest);

|

||||

if (i === -1) return this;

|

||||

|

||||

state.pipes.splice(i, 1);

|

||||

state.pipesCount -= 1;

|

||||

if (state.pipesCount === 1) state.pipes = state.pipes[0];

|

||||

|

||||

dest.emit('unpipe', this);

|

||||

|

||||

return this;

|

||||

};
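`pipe()` and `unpipe()` keep `state.pipes` in one of three shapes: `null`, a single destination, or an array of destinations. The sketch below isolates that bookkeeping in two hypothetical helpers, `addPipe` and `removePipe`, and leaves out the event wiring and the `flowing` flag. Plain objects stand in for destination streams.

```javascript
// Hypothetical helpers isolating the pipes/pipesCount bookkeeping.
function addPipe(state, dest) {
  switch (state.pipesCount) {
    case 0: state.pipes = dest; break;                // single destination
    case 1: state.pipes = [state.pipes, dest]; break; // promote to an array
    default: state.pipes.push(dest); break;
  }
  state.pipesCount += 1;
}

function removePipe(state, dest) {
  if (state.pipesCount === 0) return false;
  if (state.pipesCount === 1) {
    if (dest !== state.pipes) return false; // not the destination we hold
    state.pipes = null;
    state.pipesCount = 0;
    return true;
  }
  var i = state.pipes.indexOf(dest);
  if (i === -1) return false;
  state.pipes.splice(i, 1);
  state.pipesCount -= 1;
  // Collapse back to the single-destination shape.
  if (state.pipesCount === 1) state.pipes = state.pipes[0];
  return true;
}

var a = { name: 'a' }, b = { name: 'b' }, c = { name: 'c' };
var state = { pipes: null, pipesCount: 0 };
addPipe(state, a);
addPipe(state, b);
addPipe(state, c);
removePipe(state, b); // pipes is now [a, c]
removePipe(state, a); // pipes collapses to c itself
console.log(state.pipes === c, state.pipesCount);
```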

|

||||

|

||||

// set up data events if they are asked for

|

||||

// Ensure readable listeners eventually get something

|

||||

Readable.prototype.on = function (ev, fn) {

|

||||

var res = Stream.prototype.on.call(this, ev, fn);

|

||||

|

||||

// If listening to data, and it has not explicitly been paused,

|

||||

// then call resume to start the flow of data on the next tick.

|

||||

if (ev === 'data' && false !== this._readableState.flowing) {

|

||||

this.resume();

|

||||

}

|

||||

|

||||

if (ev === 'readable' && !this._readableState.endEmitted) {

|

||||

var state = this._readableState;

|

||||

if (!state.readableListening) {

|

||||

state.readableListening = true;

|

||||

state.emittedReadable = false;

|

||||

state.needReadable = true;

|

||||

if (!state.reading) {

|

||||

processNextTick(nReadingNextTick, this);

|

||||

} else if (state.length) {

|

||||

emitReadable(this);

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

return res;

|

||||

};

|

||||

Readable.prototype.addListener = Readable.prototype.on;

|

||||

|

||||

function nReadingNextTick(self) {

|

||||

debug('readable nexttick read 0');

|

||||

self.read(0);

|

||||

}

|

||||

|

||||

// pause() and resume() are remnants of the legacy readable stream API

|

||||

// If the user uses them, then switch into old mode.

|

||||

Readable.prototype.resume = function () {

|

||||

var state = this._readableState;

|

||||

if (!state.flowing) {

|

||||

debug('resume');

|

||||

state.flowing = true;

|

||||

resume(this, state);

|

||||

}

|

||||

return this;

|

||||

};

|

||||

|

||||

function resume(stream, state) {

|

||||

if (!state.resumeScheduled) {

|

||||

state.resumeScheduled = true;

|

||||

processNextTick(resume_, stream, state);

|

||||

}

|

||||

}

|

||||

|

||||

function resume_(stream, state) {

|

||||

if (!state.reading) {

|

||||

debug('resume read 0');

|

||||

stream.read(0);

|

||||

}

|

||||

|

||||

state.resumeScheduled = false;

|

||||

stream.emit('resume');

|

||||

flow(stream);